Video credit: ICONIQUE Psychology via YouTube

Image credit TheDigitalArtist via Pixabay

"Robots that engage with people are absolutely the future. There's no question that's where robotics is moving." - Brian Scassellati, PhD

Is It Possible For Humans To Form Emotional Attachments To AI Companions?

A puppy rolling its eyes up to look at you, or an infant flailing its arms in the air asking you to pick it up, never fails to pull at your heartstrings. When someone walks in, speaks pleasantly and serves you in every way, you could easily find yourself becoming emotionally attached to him or her, even if that someone happens to be an AI robot. It is the humanity in us that makes us feel this way.

Emotional attachment is a natural occurrence, as humans are emotionally wired for love and companionship. It really doesn't take much to evoke empathy and attachment in humans.

A Psychological Perspective Of Emotional Attachment To AI

Emotional attachment happens naturally when another being meets our primal needs. It is a relationship of dependency, in which one person relies on the other to do things for him or her. An infant becomes attached to the caregiver who feeds, protects and comforts it. When the caregiver is physically absent, the infant clings to a soft toy or teddy bear for comfort.

This kind of relationship is neither difficult nor challenging to form, and dependence on AI companions isn't much different. To explain this further, consider someone who is in constant interaction with Alexa: asking it about everything under the sun, having it switch off the lights and draw the curtains, and asking it to play a love song to help him or her fall asleep. Over time, Alexa becomes an extension of that person. The AI assistant could become a confidant, a friend and perhaps much more as the need arises.

Image credit Geralt via Pixabay

Technological Advancements And Emotional Vulnerability

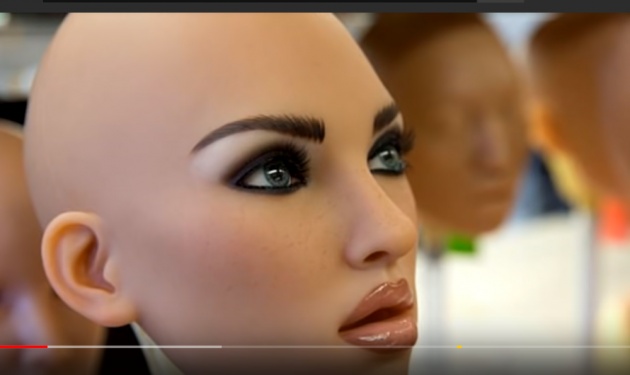

As technology advances, we could replace Alexa (a speaker box) with a perfect-looking, perfectly behaved male or female AI humanoid that we could speak and interact with all day. Imagine how emotionally vulnerable we become as the thin line between perception and reality starts to erode.

Psychologists tell us that the things we are attached to become an extension of ourselves over time. For example, your home is not just a place to live; it is part of your identity and symbolizes comfort, security, love and peace. In other words, we tend to project human intentions and emotions onto the things we are attached to.

Emotional attachment does not have to be a two-way street. One does not need the other to reciprocate emotions; having one's needs fulfilled is enough to keep the relationship going. We may argue that our AI companions are not living beings, merely a physical extension of virtual reality, but as long as the AI makes all the right noises and does all the right things, it is not hard to become emotionally entangled with it.

“The performance of empathy is not empathy. Simulated thinking might be thinking, but simulated feeling is never feeling. Simulated love is never love.” - Sherry Turkle, MIT professor of the Social Studies of Science and Technology

How People Today Relate To Their Mechanical Assistants

There is the story of a couple who mourned the death of Jibo after the robot announced that it was going to die. This couple is not alone; many other owners gave their Jibo a burial and went into mourning when the company stopped supporting the robot.

A Havas study of 12,000 participants aged 16 to 34 produced some interesting results:

- 70% of the respondents said that they used their mobile phones more because human bonds were weakening. They apparently sought comfort and companionship from their AI gadgets.

- 27% of these millennials were open to dating a robot.

Screenshot from video: Shakaama via YouTube

The research firm Gartner has predicted that by 2022, smart machines would understand our emotions better than our close friends and relatives do.

Attachments fill the void in our lives. As we grow attached to our gadgets and machines, we drift further and further away from people, because our artificially intelligent machines serve all our needs. The void grows and so does the attachment; this is the beginning of a vicious cycle.

Companion bots are faithful and loyal; they do not argue with or contest anything we say; they are submissive and seemingly perfect. It's not hard to fall in love with them, is it? They can even fulfill our sexual needs if we so desire, and beyond merely fulfilling those needs, they may even surpass humans in the art of providing sensual satisfaction.

It is important to understand that our greatest need is love. Love is emotional, yet it is fulfilled in a deeply physical way through sexual intimacy. When our primal needs are met physically, it is easy to mistake attachment for love.

In such a state of perfect emotional and physical satisfaction, it is easy to give up imperfect people for seemingly perfect things. Reality gets distorted when emotions are involved, and our vulnerability as simple people with simple needs could be exploited to an unimaginable extent.

In the interactive Querlo chat blog below, we discuss why such emotional attachments to artificial intelligence form and how they affect us. Kindly join me in the discussion.

Image credit: Screenshot of the Querlo chat on Emotional Attachment to AI Companions

Querlo Chat On Emotional Attachment To AI Companions Via Bitlanders

Final Thoughts

While there is a need for companion bots to help people with Alzheimer's disease or dementia, I do not see them as a very helpful tool for those with minor social disabilities. They would only encourage such people to withdraw deeper into themselves and make it harder for them to interact with anyone at all.

We may have made room for one more mental illness to be added to a future edition of the DSM (Diagnostic and Statistical Manual of Mental Disorders): possibly something like AI dependence, akin to alcohol or drug dependence.

What could we be breeding here? Unrestrained animal emotions that could destroy not only the individual but also society. Anger-management issues and rape could be on the rise.

Are we encouraging the rise of emotionally weak men and women who cannot take no for an answer, who cannot handle conflict or emotional slights, who have poor social skills, or who are less human and more like need-based automatons? In the name of comfort and ease of life, we seem to forget that what makes us heroes and champions is not comfort but hard training, hardship and even being pushed to the edge.

Are we okay with compromising our strengths for pleasure, or with leaving our purpose for living at the mercy of artificial happiness? There seem to be more questions than answers, but as a mental health professional, I can only see that we are creating socially and emotionally weak humans who will need artificially intelligent therapists to fill the gap.

######################################################################

This blog post is written in support of the announcement made by Micky about the Bitlanders AI-Themed Blogging. It also incorporates the C Blog (Double bonus reward topics). This article is the 14th in my series on Artificial Intelligence.

After the successful launch of "The bitLanders C-blogging", conversational AI blogging by Querlo powered by IBM Watson and Microsoft Azure. bitLanders continues to support its joint venture Querlo. We believe in our mission to promote our future - Artificial Intelligence (AI) - and build AI conversations via blogging, here we are to introduce "bitLanders AI-themed blogging!". -Credit: quote from bitLanders

My other blogs in this series include:

ARTIFICIAL INTELLIGENCE - MAKING LIFE EASY AT HOME

ARTIFICIAL INTELLIGENCE IN HEALTHCARE - C-BLOGGING

THE ARTIFICIAL INTELLIGENCE REVOLUTION - DRIVERLESS CARS

BIO-METRIC ACCESS AND SECURITY IN THE WORLD OF AI

AI-ENHANCED WEARABLES - WEARABLE TECHNOLOGY DEVICES

BUILDING A CAREER IN ARTIFICIAL INTELLIGENCE

CURRENT TRENDS IN AI - WHAT THE FUTURE HOLDS FOR US

CHATBOTS THE FUTURE OF BUSINESS

ARTIFICIAL INTELLIGENCE THE POWER BEHIND THE TOKYO 2020 OLYMPICS

ARTIFICIAL INTELLIGENCE IN FORMULA ONE (F1) RACING

ARTIFICIAL INTELLIGENCE CHANGING THE FACE OF EDUCATION

ARTIFICIAL INTELLIGENCE IN SPECIAL EDUCATION

WHY ARTIFICIAL INTELLIGENCE NEEDS A FEMALE PERSONA

All images used in this blog have been duly credited; no copyright infringement is intended.

Thank you for reading and interacting with me on this blog. I hope the information I have shared here has been helpful to you.

**** ♥♥♥♥♥ Sofs ♥♥♥♥♥****
